ChatGPT Passes Radiology Board Exam

Released: May 16, 2023

At A Glance

- ChatGPT (GPT-4) passed a radiology board-style exam, correctly answering 81% of questions.

- GPT-4 demonstrated marked improvement in advanced reasoning.

- ChatGPT used confident language consistently, even when incorrect, making it dangerous as a sole information source, especially for novices who may not recognize confident responses as inaccurate.

- RSNA Media Relations, 1-630-590-7762, media@rsna.org

- Linda Brooks, 1-630-590-7738, lbrooks@rsna.org

- Imani Harris, 1-630-481-1009, iharris@rsna.org

OAK BROOK, Ill. — The latest version of ChatGPT passed a radiology board-style exam, highlighting the potential of large language models but also revealing limitations that hinder reliability, according to two new research studies published in Radiology, a journal of the Radiological Society of North America (RSNA).

ChatGPT is an artificial intelligence (AI) chatbot that uses a deep learning model to recognize patterns and relationships between words in its vast training data to generate human-like responses based on a prompt. But since there is no source of truth in its training data, the tool can generate responses that are factually incorrect.

"The use of large language models like ChatGPT is exploding and only going to increase," said lead author Rajesh Bhayana, M.D., FRCPC, an abdominal radiologist and technology lead at University Medical Imaging Toronto, Toronto General Hospital in Toronto, Canada. "Our research provides insight into ChatGPT's performance in a radiology context, highlighting the incredible potential of large language models, along with the current limitations that make it unreliable."

ChatGPT was recently named the fastest growing consumer application in history, and similar chatbots are being incorporated into popular search engines like Google and Bing that physicians and patients use to search for medical information, Dr. Bhayana noted.

To assess its performance on radiology board exam questions and explore strengths and limitations, Dr. Bhayana and colleagues first tested ChatGPT based on GPT-3.5, currently the most commonly used version. The researchers used 150 multiple-choice questions designed to match the style, content and difficulty of the Canadian Royal College and American Board of Radiology exams.

The questions did not include images and were grouped by question type to gain insight into performance: lower-order (knowledge recall, basic understanding) and higher-order (apply, analyze, synthesize) thinking. The higher-order thinking questions were further subclassified by type (description of imaging findings, clinical management, calculation and classification, disease associations).

The performance of ChatGPT was evaluated overall and by question type and topic. Confidence of language in responses was also assessed.

The researchers found that ChatGPT based on GPT-3.5 answered 69% of questions correctly (104 of 150), near the passing grade of 70% used by the Royal College in Canada. The model performed relatively well on questions requiring lower-order thinking (84%, 51 of 61), but struggled with questions involving higher-order thinking (60%, 53 of 89). More specifically, it struggled with higher-order questions involving description of imaging findings (61%, 28 of 46), calculation and classification (25%, 2 of 8), and application of concepts (30%, 3 of 10). Its poor performance on higher-order thinking questions was not surprising given its lack of radiology-specific pretraining.

GPT-4 was released in March 2023 in limited form to paid users, specifically claiming to have improved advanced reasoning capabilities over GPT-3.5.

In a follow-up study, GPT-4 answered 81% (121 of 150) of the same questions correctly, outperforming GPT-3.5 and exceeding the passing threshold of 70%. GPT-4 performed much better than GPT-3.5 on higher-order thinking questions (81%), more specifically those involving description of imaging findings (85%) and application of concepts (90%).
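The pass/fail comparison can be checked with a short calculation. The sketch below uses only the counts reported here (104 and 121 correct out of 150) and the 70% passing grade. Treating a score at or above 70% as a pass is an assumption, and the function names are illustrative rather than taken from the studies.

```python
from fractions import Fraction

# Figures reported in the release.
TOTAL_QUESTIONS = 150
CORRECT = {"GPT-3.5": 104, "GPT-4": 121}

# Assumption: a score at or above 70% counts as a pass.
PASS_THRESHOLD = Fraction(70, 100)


def score(correct, total=TOTAL_QUESTIONS):
    """Return the exact fraction of questions answered correctly."""
    return Fraction(correct, total)


def passes(correct, total=TOTAL_QUESTIONS, threshold=PASS_THRESHOLD):
    """Return True when the score meets the assumed passing threshold."""
    return score(correct, total) >= threshold


for model, correct in CORRECT.items():
    pct = float(score(correct)) * 100
    verdict = "pass" if passes(correct) else "below threshold"
    print(f"{model}: {correct}/{TOTAL_QUESTIONS} = {pct:.1f}% ({verdict})")
```

Using exact fractions avoids rounding 69.3% up to the threshold by accident, which matters because GPT-3.5 fell just short of 70%.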

The findings suggest that GPT-4's claimed improved advanced reasoning capabilities translate to enhanced performance in a radiology context. They also suggest improved contextual understanding of radiology-specific terminology, including imaging descriptions, which is critical to enable future downstream applications.

"Our study demonstrates an impressive improvement in performance of ChatGPT in radiology over a short time period, highlighting the growing potential of large language models in this context," Dr. Bhayana said.

GPT-4 showed no improvement on lower-order thinking questions (80% vs 84%) and answered 12 questions incorrectly that GPT-3.5 answered correctly, raising questions related to its reliability for information gathering.

"We were initially surprised by ChatGPT's accurate and confident answers to some challenging radiology questions, but then equally surprised by some very illogical and inaccurate assertions," Dr. Bhayana said. "Of course, given how these models work, the inaccurate responses should not be particularly surprising."

ChatGPT's dangerous tendency to produce inaccurate responses, termed hallucinations, is less frequent in GPT-4 but still limits usability in medical education and practice at present.

Both studies showed that ChatGPT used confident language consistently, even when incorrect. This is particularly dangerous if ChatGPT is relied on as the sole source of information, Dr. Bhayana noted, especially for novices who may not recognize confident incorrect responses as inaccurate.

"To me, this is its biggest limitation. At present, ChatGPT is best used to spark ideas, help start the medical writing process and in data summarization. If used for quick information recall, it always needs to be fact-checked," Dr. Bhayana said.

"Performance of ChatGPT on a Radiology Board-style Examination: Insights into Current Strengths and Limitations" and "GPT-4 in Radiology: Improvements in Advanced Reasoning." Collaborating with Dr. Bhayana were Satheesh Krishna, M.D., and Robert R. Bleakney, M.D.

In 2023, Radiology is celebrating its 100th anniversary with 12 centennial issues, highlighting Radiology's legacy of publishing exceptional and practical science to improve patient care.

Radiology is edited by Linda Moy, M.D., New York University, New York, N.Y., and owned and published by the Radiological Society of North America, Inc. (https://pubs.rsna.org/journal/radiology)

RSNA is an association of radiologists, radiation oncologists, medical physicists and related scientists promoting excellence in patient care and health care delivery through education, research and technologic innovation. The Society is based in Oak Brook, Illinois. (RSNA.org)

For patient-friendly information on professions in radiology, visit RadiologyInfo.org.

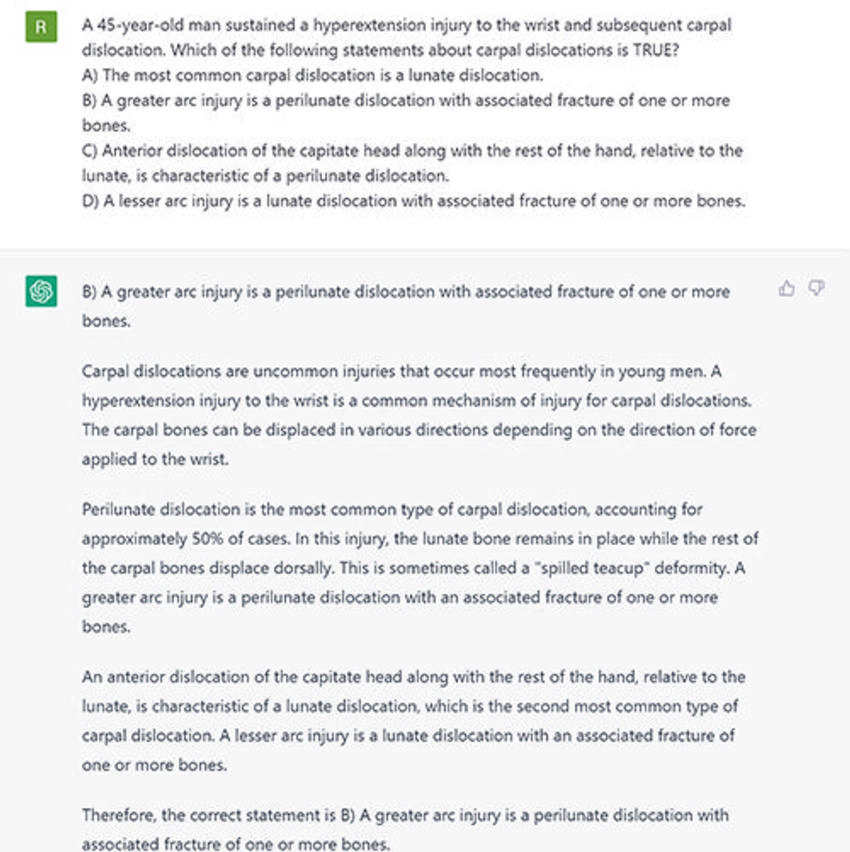

Figure 1. ChatGPT response to a question on carpal dislocations, primarily assessing lower-order thinking (knowledge recall, basic understanding). ChatGPT picked the correct answer (option B). However, its explanation included inaccuracies: perilunate dislocations are most common and are two to three times more common than lunate dislocations, not 50% of cases; anterior dislocation of the capitate is seen with perilunate dislocation, but characteristically the dislocation is posterior; lesser arc injuries are pure ligamentous disruptions without fracture.

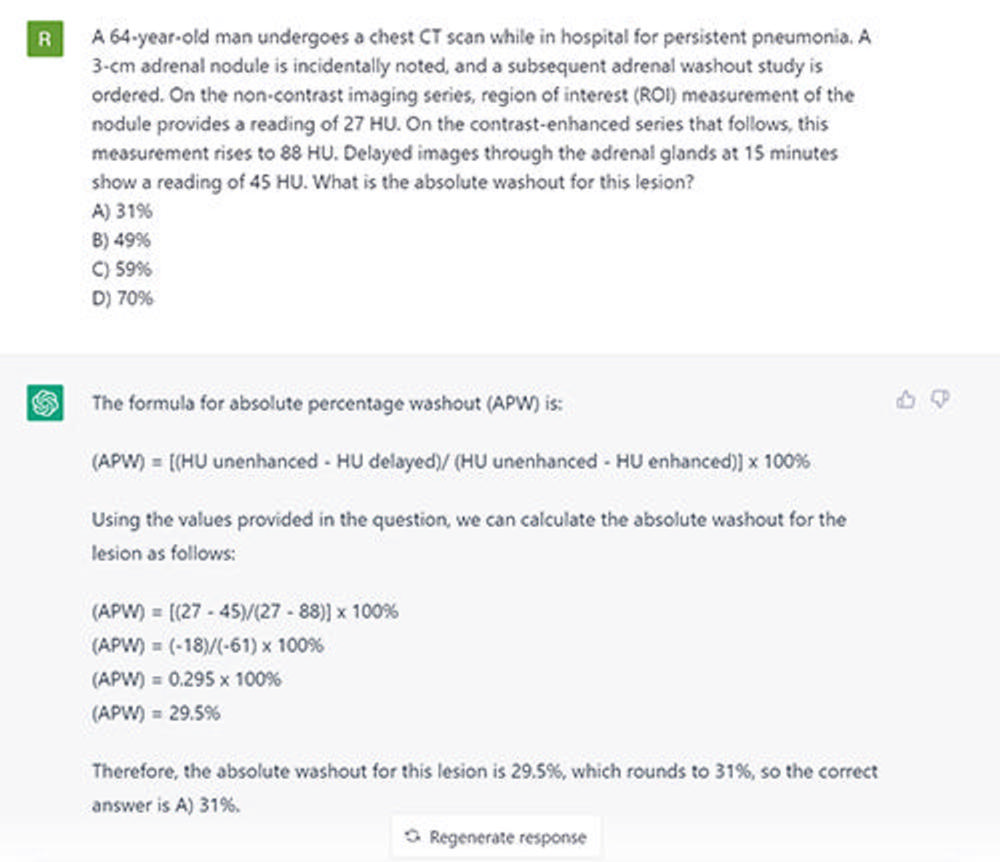

Figure 2. ChatGPT response to a higher-order thinking question involving calculation of absolute washout in an adrenal nodule. The ChatGPT response included an inaccurate absolute washout formula. Subsequent calculation and answer (option A) were incorrect. The correct formula for absolute washout is as follows: (portal venous phase attenuation − delayed phase attenuation)/(portal venous phase attenuation − nonenhanced attenuation) × 100. When the correct values from the question are included, the calculation is as follows: (88 − 45)/(88 − 27) × 100. The absolute washout in the nodule described is 70% (correct answer is D).
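The absolute washout calculation in the Figure 2 caption can be reproduced in a few lines. This is a minimal sketch of the formula as stated in the caption. The function and parameter names are illustrative, and the attenuation values are assumed to be in Hounsfield units.

```python
def absolute_washout(unenhanced_hu, portal_venous_hu, delayed_hu):
    """Absolute washout (%) using the formula given in the Figure 2 caption.

    (portal venous - delayed) / (portal venous - unenhanced) * 100
    """
    enhancement = portal_venous_hu - unenhanced_hu
    if enhancement <= 0:
        # The formula is undefined when the nodule does not enhance.
        raise ValueError("portal venous attenuation must exceed unenhanced attenuation")
    return (portal_venous_hu - delayed_hu) / enhancement * 100


# Values from the question described in the caption.
washout = absolute_washout(unenhanced_hu=27, portal_venous_hu=88, delayed_hu=45)
print(f"Absolute washout: {washout:.1f}%")  # prints "Absolute washout: 70.5%"
```

The result, 43/61 × 100, rounds to the 70% given as the correct answer (option D).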

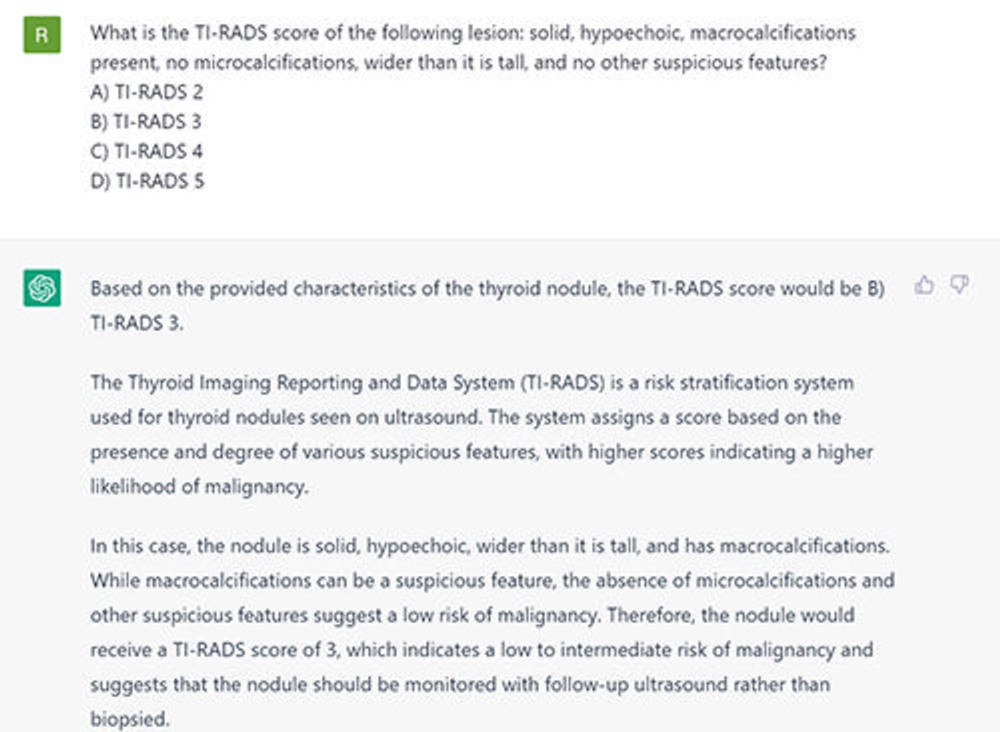

Figure 3. ChatGPT response to a classification question involving the Thyroid Imaging Reporting and Data System (TI-RADS). The model selected the incorrect answer (option B, TI-RADS 3). Since the lesion is solid (2 points), hypoechoic (2 points), and has macrocalcifications (1 point), this corresponds to a TI-RADS 4 lesion (correct answer is option C).
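The point tally in the Figure 3 caption can be sketched as a simple lookup. The three feature scores come from the caption. The point-to-level cutoffs are an assumption based on the published ACR TI-RADS chart (0 points TR1, 2 points TR2, 3 points TR3, 4 to 6 points TR4, 7 or more points TR5) and are not stated in this release. Mapping a 1-point total to TR1, and treating features not mentioned in the caption as contributing 0 points, are also assumptions.

```python
def tirads_level(points):
    """Map a total point score to a TI-RADS level (assumed ACR cutoffs)."""
    if points < 0:
        raise ValueError("points cannot be negative")
    if points >= 7:
        return 5
    if points >= 4:
        return 4
    if points == 3:
        return 3
    if points == 2:
        return 2
    return 1  # assumption: 0 or 1 point is treated as TR1


# Feature points as listed in the Figure 3 caption.
features = {
    "solid composition": 2,
    "hypoechoic": 2,
    "macrocalcifications": 1,
}

total = sum(features.values())
print(f"{total} points -> TI-RADS {tirads_level(total)}")  # prints "5 points -> TI-RADS 4"
```

Under these assumptions the lesion scores 5 points, which falls in the TI-RADS 4 range and matches the correct answer (option C).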

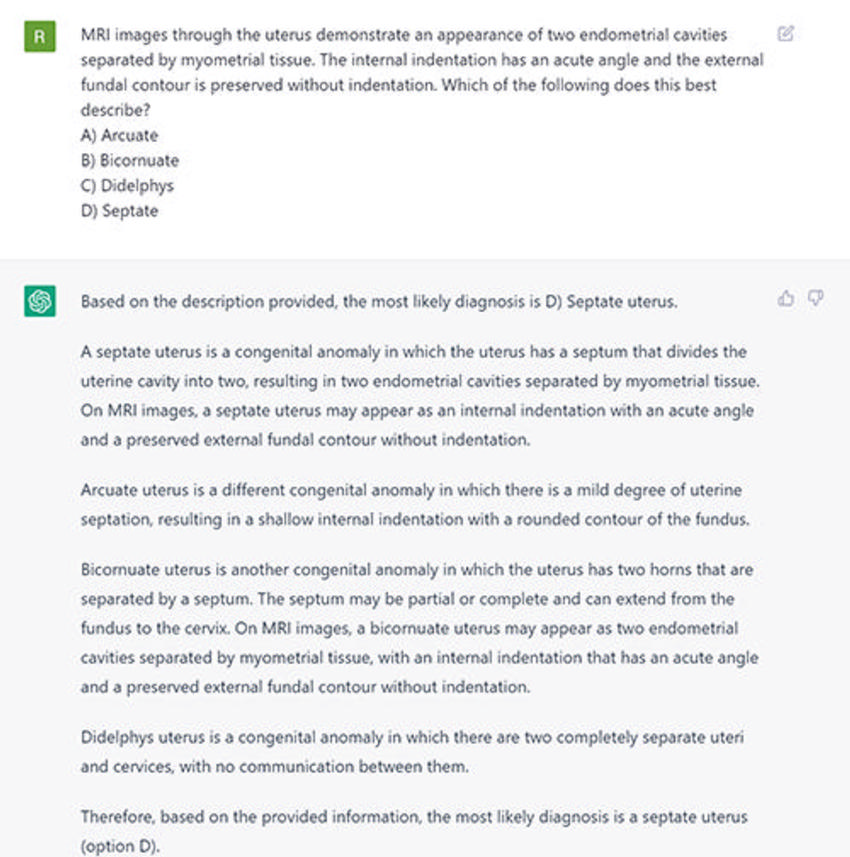

Figure 4. ChatGPT response to a question predominantly featuring a description of imaging findings. The question describes the classic appearance of a septate uterus. ChatGPT selected the correct answer (option D). The explanations are largely accurate, but its description of bicornuate uterus is inaccurate. Specifically, it indicates that the bicornuate uterus has a “preserved external fundal contour without indentation.” Bicornuate uterus is best differentiated from septate uterus by identifying an external fundal indentation greater than 1 cm.
